The growing wave of verdicts against social media companies marks a significant turning point in how governments, courts and societies view the power and responsibility of digital platforms. Over the past decade, companies like Meta Platforms, Google and X have transformed communication, commerce and even politics. However, as their influence has expanded, so too has scrutiny of their practices, ranging from data privacy violations and misinformation to monopolistic behavior and harmful content.

Recent verdicts in courts across the world have begun to impose real consequences on these companies. These rulings are not only about penalties or compensation but also about redefining accountability in the digital age. Yet despite these developments, many complex questions remain unresolved, creating an uncertain future for both the social media companies and their billions of users.

### Background: The Rise of Social Media Power

Social media platforms were initially celebrated as tools for connection, democratization of information and empowerment of individuals. Platforms like Facebook, YouTube and Twitter allowed users to share ideas, mobilize communities and access conversations in real time.

However, this rapid expansion came with consequences:

* Spread of misinformation and fake news

* Data privacy breaches

* Algorithm-driven echo chambers

* Harassment and harmful content

* Influence on elections and public opinion

For years, these social media companies operated in a relatively lightly regulated environment. Laws struggled to keep up with technological advancements, and many governments adopted a “hands-off” approach to encourage innovation.

### Landmark Verdicts and Their Impact

In recent years, courts and regulators have started taking stronger action against social media companies. These verdicts generally fall into four categories:

#### Data Privacy Violations

Cases involving misuse of user data have been among the most prominent. Courts have ruled against social media companies for:

* Collecting data without proper consent

* Sharing user information with third parties

* Failing to secure data adequately

These verdicts have led to massive fines and stricter compliance requirements. For instance, regulations like the EU’s GDPR have forced social media companies to rethink how they handle user data globally.

#### Content Moderation Failures

Courts have increasingly held social media platforms accountable for harmful content, including:

* Hate speech

* Terrorist propaganda

* Cyberbullying

* Disinformation

While social media platforms argue that they are intermediaries and not publishers, courts in some jurisdictions have challenged this claim, especially when algorithms actively promote content.

#### Antitrust and Monopoly Cases

Governments have accused social media platforms of abusing their dominant positions. Legal actions have focused on:

* Anti-competitive practices

* Acquisitions aimed at eliminating competition

* Preferential treatment of their own services

These cases could lead to structural changes, including the breakup of large tech companies.

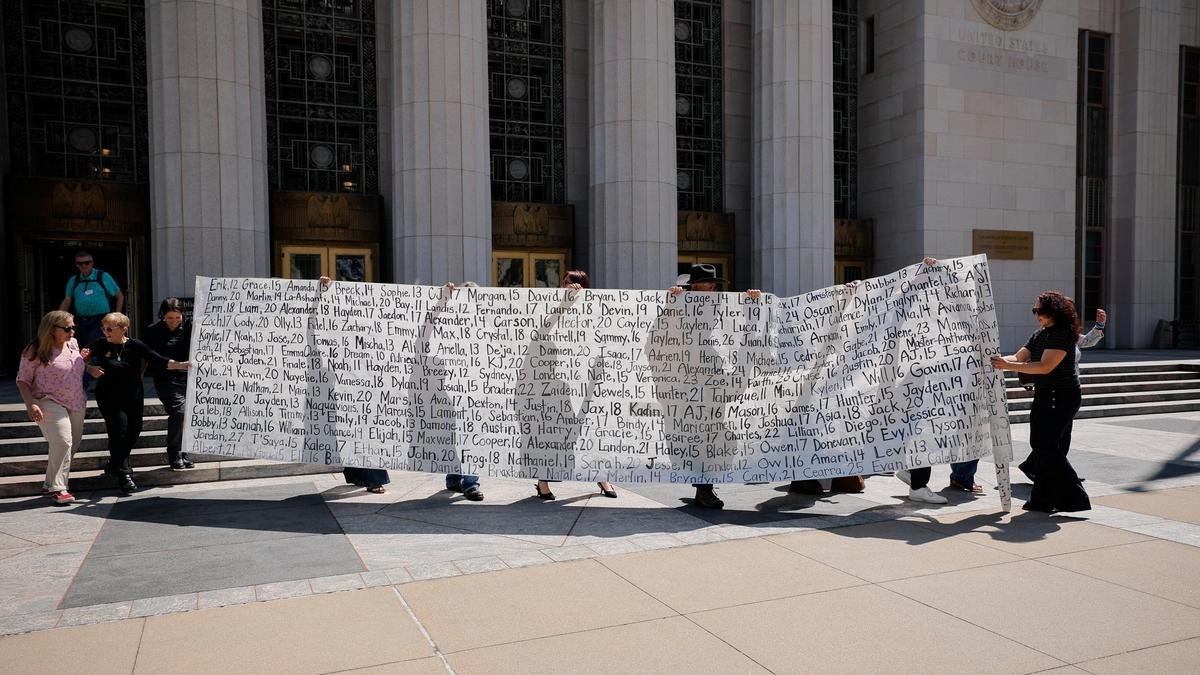

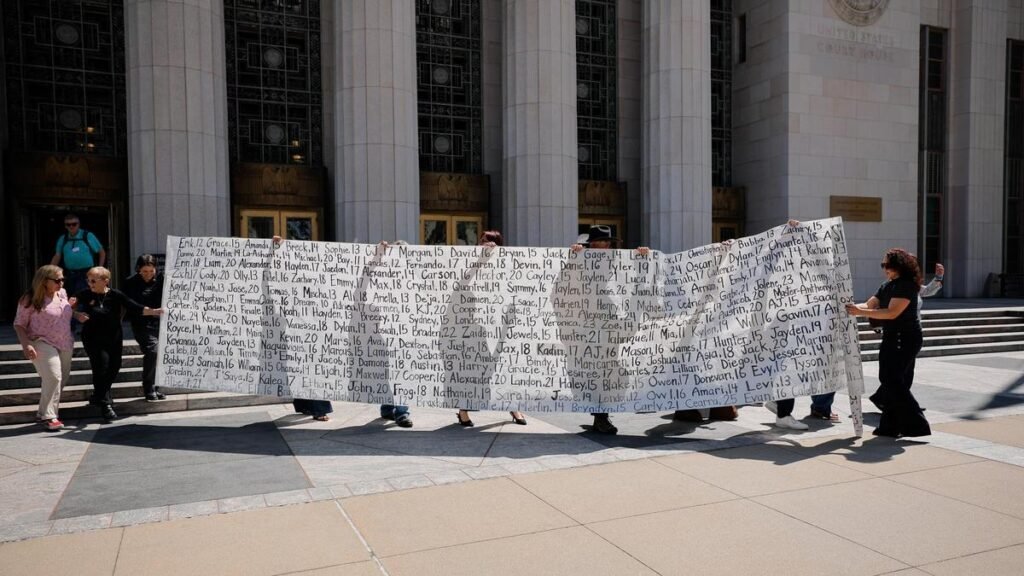

#### Mental Health and Youth Protection

A newer area of litigation involves the impact of social media on mental health, especially among young users. Lawsuits claim that:

* Social media platforms are designed to be addictive

* Algorithms amplify harmful content

* Companies ignore research on mental health risks

### Consequences of These Verdicts

The verdicts against social media companies have triggered wide-ranging consequences:

* **Financial Penalties**: Fines running into billions of dollars have become common. While large companies can absorb these costs, repeated penalties affect investor confidence and long-term strategy.

* **Policy Changes**: Social media companies have been forced to improve transparency, strengthen content moderation and give users more control over their data.

* **Increased Regulation**: Governments are introducing laws to regulate digital platforms. This includes data protection laws, content accountability rules and platform liability frameworks.

* **Shift in Public Perception**: Public trust in social media has declined. Users are becoming more aware of privacy risks and manipulation, leading to calls for more ethical tech practices.

### The Core Legal Debate: Platform vs Publisher

One of the most critical unresolved questions is whether social media companies should be treated as:

* Platforms (neutral intermediaries)

* Publishers (responsible for content)

Traditionally, companies like Facebook and Twitter have argued that they are platforms, meaning they are not liable for user-generated content. This protection is central to laws like Section 230 of the Communications Decency Act in the United States.

However, critics argue that:

* Algorithms actively promote content

* Companies profit from engagement, including engagement with harmful content

* Moderation decisions show editorial control

Courts have yet to establish a consistent standard, leading to conflicting rulings across jurisdictions.

### Challenges in Regulating Social Media

Despite legal victories against tech giants, regulating social media remains extremely complex:

* **Global Nature of Platforms**: Social media operates across borders, but laws are national. A ruling in one country may not apply elsewhere, creating enforcement challenges.

* **Balancing Free Speech**: Governments must balance preventing harmful content against protecting freedom of expression. Over-regulation risks censorship, while under-regulation allows abuse.

* **Technological Complexity**: Algorithms are highly complex and often opaque. Even regulators struggle to understand how content is ranked and distributed.

* **Rapid Innovation**: Technology evolves faster than legal systems. By the time laws are implemented, social media platforms may have already changed their features.

### Industry Response

Social media companies have responded to this pressure in several ways:

* **Increased Transparency**: Platforms now publish transparency reports, content moderation statistics and algorithm explanations (to some extent).

* **Investment in Safety**: Companies are investing heavily in AI moderation tools, human content reviewers and fact-checking partnerships.

* **Lobbying Efforts**: Tech companies actively engage with policymakers to shape regulations and protect their business models.

### The Role of Users and Society

While companies and governments play major roles, users are also part of the equation:

* Users generate and share content

* Public demand influences platform policies

* Awareness can reduce misinformation spread

Digital literacy is becoming essential to navigating social media responsibly.

### Unanswered Questions

Despite progress, several key questions remain:

* **Who is Ultimately Responsible?**: Should responsibility lie with the social media platform, the user or the algorithm designers? There is no consensus.

* **Can Algorithms Be Regulated?**: Regulating algorithms raises concerns about transparency versus trade secrets, bias and fairness, and government overreach.

* **Will Big Tech Be Broken Up?**: Antitrust cases could reshape the industry. However, breaking up companies may not necessarily solve the underlying issues.

* **Is Self-Regulation Enough?**: Companies claim they can regulate themselves, while critics argue that external oversight is necessary.

### Global Trends

Different regions are taking different approaches:

* **European Union**: Strict regulations like the GDPR and the Digital Services Act

* **United States**: Ongoing debates over Section 230 and antitrust laws

* **India**: Increasing focus on content regulation and data localization

This fragmented approach creates challenges for platforms trying to comply with multiple legal systems.

### The Future of Social Media Regulation

Looking ahead, several trends are likely:

* **Stricter Laws**: Governments will continue introducing laws targeting data privacy, platform accountability and AI transparency.

* **Greater Accountability**: Courts may increasingly hold social media companies responsible for algorithmic decisions and content amplification.

* **Rise of Decentralized Platforms**: New models of social media may emerge, reducing centralized control and potentially avoiding some regulatory issues.

* **Ethical Technology Movement**: There is growing demand for ethical design, user well-being and responsible innovation.

Verdicts against social media companies represent a major shift in the digital landscape. They signal that governments and courts are no longer willing to allow tech giants to operate without accountability. These rulings have already led to penalties, policy changes and increased regulation.

However, the path forward is far from clear. Fundamental questions about responsibility, free speech, algorithmic control and global governance remain unresolved. As technology continues to evolve, so too must the legal and ethical frameworks that govern it.

The challenge lies in striking the right balance: ensuring that social media remains a space for innovation and expression while protecting users from harm. The decisions made in the coming years will shape not only the future of social media but also the broader digital society.