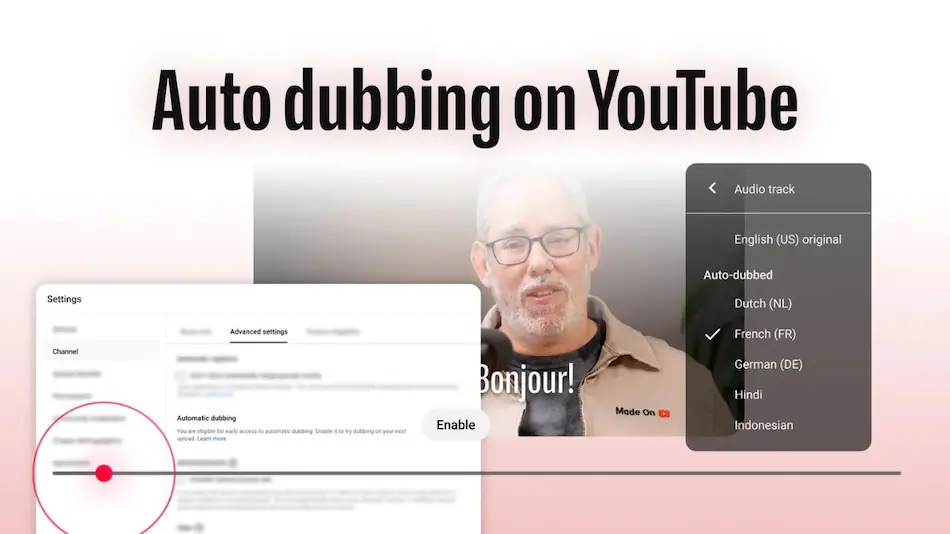

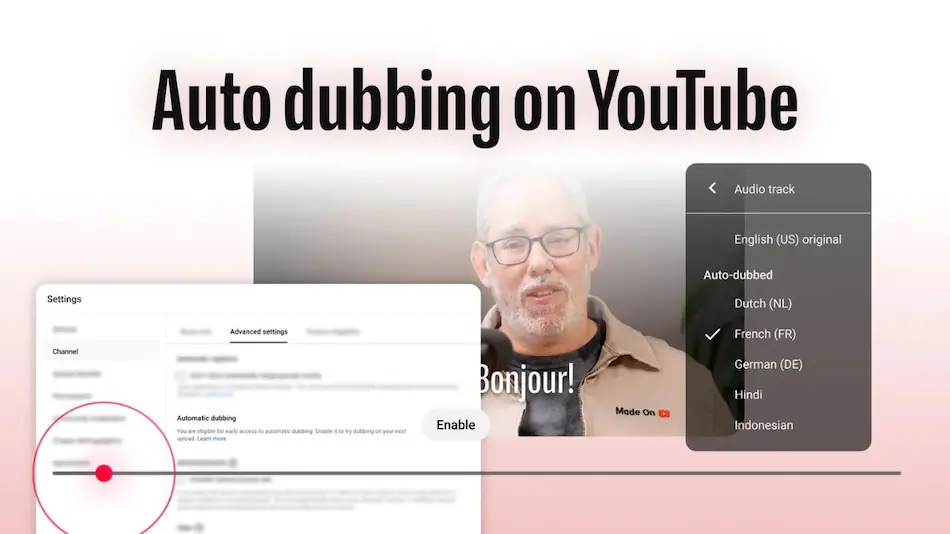

YouTube has made its AI-powered auto-dubbing feature available to everyone. It now supports 27 languages, and YouTube has also made changes to improve how the dubbed audio sounds.

A new “Expressive Speech” option helps preserve the tone of the speaker's voice. Viewers can now choose which language they want to hear, and creators can review the dubbed audio before it is published.

The goal of these changes is to let people who speak different languages watch the same videos, so creators can reach audiences all over the world. YouTube also has to deal with some problems: keeping the audio accurate, handling how closely a dub sounds like the original speaker, and working out how viewers will discover dubbed videos. Auto-dubbing can be very helpful, but it has to be handled with care.

What auto-dubbing actually is

Automatic dubbing, or auto-dubbing, lets people listen to a video in their own language instead of reading subtitles to follow what is going on. For each video it does one main thing:

* It generates translated audio tracks in other languages.

Under the hood, the system typically does the following:

Transcribes the original spoken audio to text.

Translates that transcript into the target language.

Synthesizes a new audio track in the target language, using models that try to match the original speaker's tone and timing.

The result is an additional audio track attached to the same video. Viewers can then pick the audio they prefer: the original or the dubbed version.
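As a rough illustration, the three stages above can be sketched as a small pipeline. This is a minimal sketch, not YouTube's implementation: `transcribe`, `translate`, and `synthesize` are hypothetical placeholder functions standing in for real speech-recognition, machine-translation, and text-to-speech models.

```python
from dataclasses import dataclass


@dataclass
class DubbedTrack:
    language: str    # target language code, e.g. "hi"
    transcript: str  # translated text used for synthesis
    audio: bytes     # synthesized audio (placeholder bytes in this sketch)


def transcribe(audio: bytes) -> str:
    """Hypothetical stand-in for a speech-to-text model."""
    return audio.decode("utf-8")  # pretend the audio is already text


def translate(text: str, target_language: str) -> str:
    """Hypothetical stand-in for a machine-translation model."""
    return f"[{target_language}] {text}"


def synthesize(text: str, target_language: str) -> bytes:
    """Hypothetical stand-in for a text-to-speech model."""
    return text.encode("utf-8")


def auto_dub(original_audio: bytes, target_languages: list[str]) -> list[DubbedTrack]:
    """Run transcribe -> translate -> synthesize, once per target language."""
    transcript = transcribe(original_audio)  # step 1 runs once per video
    tracks = []
    for language in target_languages:
        translated = translate(transcript, language)  # step 2
        audio = synthesize(translated, language)      # step 3
        tracks.append(DubbedTrack(language, translated, audio))
    return tracks


tracks = auto_dub(b"Welcome to the channel", ["hi", "es"])
for track in tracks:
    print(track.language, "->", track.transcript)
```

The point of the sketch is the shape of the flow: one transcript is produced per video, and each target language gets its own translated text and its own audio track.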

What's new in this rollout: the headline details

YouTube is making auto-dubbing available to all users and creators, not just a test group or channels in a special partner program. Everyone who uploads non-music videos should now see auto-dubbing options in YouTube Studio.

Language coverage has expanded to 27 languages, up from the 8 previously offered for the “Expressive Speech” option. This unlocks regional markets for both creators and viewers.

YouTube has introduced Expressive Speech, a speech mode that tries to preserve the feeling and tone of the person who made the video. It has also made it easier for creators to review and control dubs in YouTube Studio. In addition, YouTube is testing AI lip-sync, which adjusts the lip movements of people on screen to match the dubbed audio so that dubs look more natural.

Viewer features now include a Preferred Language setting, which makes it easy to choose between dubbed audio and the original audio. Automatically dubbed tracks are also clearly labeled.

These details come from news coverage and YouTube's help page. The links are at the end if you want to check the sources yourself.

The short history / context — why this matters now

YouTube has been experimenting with dubbing and multilingual audio tools for a long time. It started with a few channels and languages and kept improving the feature as its speech and translation tools got better. Now, after months of testing and a lot of discussion of multi-language audio in 2024 and 2025, it is making the feature available to more people. Two trends are driving this:

Many people watch videos in a language other than the one the video was originally made in. Adding audio in a language viewers understand can increase both reach and watch time.

Speech synthesis, including Google's AI work, has improved a lot. Synthetic voices can now carry emotional tone instead of sounding flat and robotic, which makes synthetic dubs more convincing.

Feature deep-dive: what each new piece does and why it matters

Expressive Speech and expanded language support (27 languages)

Expressive Speech is a text-to-speech (TTS) mode that aims to preserve the creator's emotion, cadence, and energy instead of sounding like a machine reading words.

Why it matters: automated dubs often sounded flat and broke the emotional connection with viewers. Expressive TTS narrows that gap and makes content feel more authentic across languages.

Caveats: expressive TTS is still synthetic. It can struggle with regional accents, context-dependent pronunciation, and idioms, and it sometimes produces odd phrasing or puts the stress on the wrong word.

Creator review and controls in YouTube Studio

Creators can review the generated transcript, edit translations before publishing, choose which dubs to publish or remove, and manage audio tracks in YouTube Studio.

Why it matters: it stops translations from being published blindly and keeps creators in the loop. Editorial control is critical because automatic translations are sometimes wrong in ways that change the meaning or tone.

Viewer language controls and Preferred Language

Viewers can set a Preferred Language (for example, Hindi), and YouTube will try to use audio tracks or subtitles in that language when they are available. YouTube also labels audio that has been automatically dubbed, so viewers know what they are getting.

Why it matters: it gives multilingual viewers a better experience and helps them find the version in their language. It also avoids automatically playing a dub for viewers who do not want one.
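The fallback behavior described above can be sketched in a few lines. This is an assumed simplification, not YouTube's actual selection logic: `pick_audio_track` and its parameters are hypothetical names chosen for illustration.

```python
from typing import Optional


def pick_audio_track(
    available: dict[str, bool],
    original_language: str,
    preferred_language: Optional[str],
) -> tuple[str, bool]:
    """Return (language, is_auto_dubbed) for the track to play.

    `available` maps a language code to True if that track is auto-dubbed,
    or False if it is original or human-made audio.
    """
    if preferred_language and preferred_language in available:
        return preferred_language, available[preferred_language]
    # No preference set, or no track in that language: use the original audio.
    return original_language, available.get(original_language, False)


tracks = {"en": False, "hi": True, "es": True}
print(pick_audio_track(tracks, "en", "hi"))  # ('hi', True)
print(pick_audio_track(tracks, "en", "fr"))  # ('en', False)
```

Returning the auto-dubbed flag alongside the language mirrors the labeling point: the player can show viewers when the audio they hear was generated automatically.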

Lip-sync testing (early stage)

YouTube is experimenting with matching mouth movements and other visual cues to the dubbed audio, so that dubbed videos look more natural.

Why it matters: when the video and audio are in sync, the dub is less distracting and the perceived quality improves. This is technically hard and still experimental, so results will vary.

Adoption signals YouTube has shared

YouTube reported strong early viewership of auto-dubbed videos. In December, more than 6 million YouTube users watched at least 10 minutes of auto-dubbed content every day. This suggests real demand for machine-generated dubs, and it is one of the reasons YouTube decided to make the feature available to more people.

Benefits: who gains and how

Creators

Reach across languages: one upload can reach speakers of many languages. You do not have to re-record the audio or upload to separate channels.

Lower cost: small creators save time and money because they do not have to pay voice actors, and their videos can still reach viewers around the world.

Potential growth in watch time and revenue: more viewers can lead to longer watch sessions and higher ad and membership revenue, although results vary by region and content type.

Viewers

Accessibility: viewers who have a hard time reading subtitles, for example when multitasking or watching on a small screen, can listen in their native language instead.

Choice: the Preferred Language control lets viewers choose between the original audio and a dubbed track.

Platforms & advertisers

More inventory across languages: additional language versions can improve ad targeting and fill rates, especially in underserved markets.

Faster localized creative testing: advertisers can test ad creative in different regions and get results quickly.

Risks, limitations & ethical concerns

No AI feature is completely risk-free, and auto-dubbing has to be used with care.

Translation accuracy and nuance

Machine translation struggles with idioms, cultural references, sarcasm, and domain-specific terminology. A mistranslation can be embarrassing or misleading, especially for news, medical information, or legal content. Creator review reduces the risk of machine translation errors but does not eliminate it.

Voice likeness and consent

Expressive Speech tries to mimic the creator's vocal style, which raises several questions.

Creators do not always consent to synthetic reproductions of their voice in other languages, and they should have a say in how their voice is used.

Bad actors could misuse the system to create mimicked audio for impersonation, making it sound as if a real person said something they never said.

YouTube's creator controls and labeling help, but the law and ethics around voice cloning are still evolving, including the question of when it is acceptable to use someone's voice without their permission.

Cultural tone and localization

Literal translations may preserve the words but still not sound right in another culture. Good localization makes content sound natural locally, which sometimes means rephrasing rather than translating word for word. Fully automated translation is still weak at this.

Monetization & creative jobs

More automation could reduce demand for human dubbing in some areas. On the other hand, it can create new work for local editors, reviewers, and cultural consultants, because machines still lack a human understanding of nuance.

Discoverability & platform dynamics

Recommendation algorithms may favor automatically dubbed content in some regions, which can change how content is distributed. Creators should monitor their analytics to see how dubs affect reach and engagement.

How to use it — a practical creator walkthrough

For exact steps, check the YouTube Studio help page, because the user interface may differ slightly. It is the place to go for up-to-date information.

Upload your video to YouTube as usual.

In YouTube Studio, open the video you want to work on and find the Translations, Audio, or Auto-dubbing section. YouTube adds a note to the description when a video has an auto-dubbed track, so you can easily see whether one already exists.

Choose the languages you want to dub into from the list of 27 supported languages.

Preview the auto-generated transcript and translation — edit if required.

Choose whether to publish the auto-dubbed audio track or keep it as a draft.

If Expressive Speech is available, select it for more natural-sounding TTS, and try the lip-sync options if they are offered in your region or test group.

Monitor analytics for each dubbed track: watch time, retention (how well the track keeps people watching), and viewer geography. This shows which dubbed tracks work and which do not.
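The monitoring step can be prototyped on exported data before committing to a dashboard. The sketch below is a minimal example under stated assumptions: the `views` records, the 10-minute video length, and the definition of retention as the average share of the video watched are all hypothetical and do not reflect how YouTube Analytics reports these metrics.

```python
from collections import Counter, defaultdict

# Hypothetical view records: (track language, minutes watched, viewer country).
VIDEO_LENGTH_MINUTES = 10.0
views = [
    ("en", 8.0, "US"),
    ("hi", 9.0, "IN"),
    ("hi", 6.0, "IN"),
    ("es", 3.0, "MX"),
    ("es", 5.0, "ES"),
    ("es", 4.0, "MX"),
]


def summarize_tracks(records, video_length):
    """Aggregate watch time, average retention, and top country per track."""
    minutes = defaultdict(list)
    countries = defaultdict(Counter)
    for language, watched, country in records:
        minutes[language].append(watched)
        countries[language][country] += 1

    summary = {}
    for language, watched_list in minutes.items():
        total = sum(watched_list)
        summary[language] = {
            "watch_minutes": total,
            # Retention here = average share of the video that was watched.
            "retention": total / (len(watched_list) * video_length),
            "top_country": countries[language].most_common(1)[0][0],
        }
    return summary


for language, stats in summarize_tracks(views, VIDEO_LENGTH_MINUTES).items():
    print(language, stats)
```

Grouping by track language keeps a weak dub from hiding behind a strong one: in this made-up data the Hindi track retains viewers much better than the Spanish one.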

Tips & best practices for creators

Always review the translated script before publishing — especially for content with technical terms or sensitive material.

Keep a glossary of key terms and brand names. It makes bulk edits across languages easier.

Run an A/B test: publish one video with an expressive dubbed voice, compare its engagement with the original version, and adjust your settings based on the results.

Consider manual voiceovers for signature content (e.g., a flagship series) to preserve high production value.

Label dubs and be transparent: note in the description which tracks are auto-dubbed, and invite feedback from local audiences so you can fix awkward phrasing.
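The glossary tip above lends itself to a small script run over translated drafts before you paste them back into YouTube Studio. This is a minimal sketch: the `GLOSSARY` structure, the sample sentences, and `apply_glossary` are hypothetical, and it uses plain string replacement rather than any YouTube feature.

```python
# Hypothetical glossary: per language, map an unwanted rendering to the preferred one.
GLOSSARY = {
    "es": {"Estudio de YouTube": "YouTube Studio"},
    "fr": {"Studio de YouTube": "YouTube Studio"},
}


def apply_glossary(
    translations: dict[str, str],
    glossary: dict[str, dict[str, str]],
) -> dict[str, str]:
    """Return new translations with each language's glossary fixes applied."""
    fixed = {}
    for language, text in translations.items():
        for unwanted, preferred in glossary.get(language, {}).items():
            text = text.replace(unwanted, preferred)
        fixed[language] = text
    return fixed


drafts = {
    "es": "Abre Estudio de YouTube y elige un idioma.",
    "fr": "Ouvrez Studio de YouTube et choisissez une langue.",
    "pt": "Abra o YouTube Studio e escolha um idioma.",
}
fixed = apply_glossary(drafts, GLOSSARY)
print(fixed["es"])  # Abre YouTube Studio y elige un idioma.
```

Languages without a glossary entry pass through unchanged, so the same script can run over every draft at once.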

How this compares to other localization methods

Subtitles: cheapest and fastest but require reading; good for dense info.

Manual dubbing (human voice actors): highest naturalness and cultural fit, but costly and time-consuming.

Auto-dubbing (YouTube): a middle ground. It is fast and inexpensive, and Expressive Speech is improving its naturalness. It is the best choice when you want broad reach on a limited budget.

Hybrid (automated draft plus human post-editing): often the best approach for high-stakes content.

Potential downstream effects on the creator economy

Democratized reach: niche creators from smaller markets can now access wider audiences.

Shifts in skills demand: more need for localization editors and multilingual community managers.

New creative formats: creators may design content with dubbing in mind, for example by avoiding on-screen text that is not translated, so the content works across languages.

Monetization patterns: localized audio could open up ad revenue in non-English-speaking markets. Creators should track watch time and ad revenue after enabling dubs to see whether localized audio is actually working for them.

Where it will likely improve next
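A before-and-after comparison is enough to start answering that question. The sketch below uses made-up figures for a single video. The 28-day windows, the field names, and the revenue-per-1,000-views calculation are illustrative assumptions, not metrics taken from YouTube Analytics.

```python
def relative_change(before: float, after: float) -> float:
    """Return the fractional change from `before` to `after` (0.25 means +25%)."""
    if before == 0:
        raise ValueError("before must be non-zero to compute a relative change")
    return (after - before) / before


def revenue_per_thousand_views(stats: dict) -> float:
    """Revenue normalized by views, so growth in traffic alone does not inflate it."""
    return stats["revenue"] * 1000 / stats["views"]


# Hypothetical figures for one video, 28 days before and after enabling dubs.
before = {"avg_view_minutes": 4.0, "revenue": 120.0, "views": 40_000}
after = {"avg_view_minutes": 5.0, "revenue": 180.0, "views": 50_000}

watch_lift = relative_change(before["avg_view_minutes"], after["avg_view_minutes"])
rpm_lift = relative_change(
    revenue_per_thousand_views(before), revenue_per_thousand_views(after)
)
print(f"Average view duration: {watch_lift:+.0%}")
print(f"Revenue per 1,000 views: {rpm_lift:+.0%}")
```

A simple comparison like this cannot separate the effect of dubbing from seasonality or other changes, so treat the result as a signal to investigate rather than proof.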

Better lip-sync and mouth-movement alignment as testing matures.

More languages and dialects, including regionally tailored variants.

Community-assisted translation improvements, such as crowd edits that everyone can see.

Stronger guardrails for voice cloning consent and authenticity labeling.

Tighter integration with creator tools (e.g., thumbnails and metadata localized per language).

Quick Q&A (common questions)

Q: Do auto-dubs include music?

A: Auto-dubbing is aimed mainly at spoken content, not music. Music rights are a separate matter with their own rules, so always check YouTube's copyright policies.

Q: Will auto-dubbing create copyright or impersonation issues?

A: It can, if it is not used carefully. A synthetic voice that sounds like a real person speaking another language could make people think that person said something they never said, and it could cause problems if people complain. YouTube provides controls and labels to help, but the legal rules for using voices this way are still being developed, on YouTube and elsewhere.

Q: Can viewers turn off auto-dubbed audio?

A: Yes. The playback controls and the Preferred Language setting let viewers choose between the original audio and a dubbed version where one is available.

Auto-dubbing is a powerful, maturing tool that can meaningfully expand reach with little incremental cost. For most creators it’s worth experimenting — especially in regions where your analytics show latent interest — but always review translations and treat auto dubs as one tool among subtitles and human voiceovers. Use analytics to validate whether a particular dubbed language genuinely grows engagement or revenue before relying on it for core content strategy.